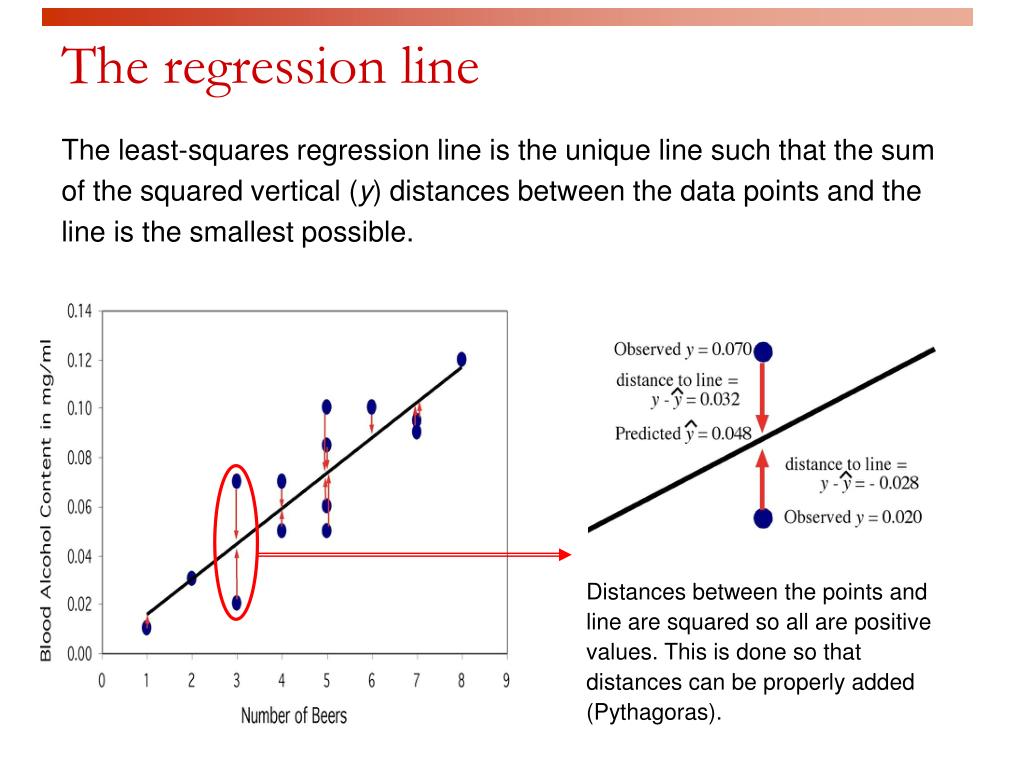

The least squares method is a mathematical procedure for finding the best-fitting curve to a given set of points by minimizing the sum of the squares of the offsets (the "residuals") of the points from the curve. The sum of the squares of the offsets is used instead of the sum of their absolute values because this allows the residuals to be treated as a continuous differentiable quantity.

Least squares regression is a way of finding the straight line that best fits the data, called the "line of best fit". Following are the steps to calculate the least-squares line from data given as $(x, y)$ pairs.

Step 1: Draw a table with 4 columns where the first two columns are for the $x$ and $y$ values.

Step 2: In the next two columns, find $xy$ and $x^2$.

Step 3: Find the sums of the $x$, $y$, $xy$, and $x^2$ columns.

Step 4: Find the value of the slope $m$ from these sums using the least-squares slope formula.

More generally, suppose we want to find a function $y = f(x) = B_1 g_1(x) + B_2 g_2(x) + \cdots + B_m g_m(x)$ that best approximates these points, where $g_1, g_2, \ldots, g_m$ are given functions of $x$. For a best-fit line, $g_1(x) = x$ and $g_2(x) = 1$. For a best-fit parabola, $g_1(x) = x^2$, $g_2(x) = x$, and $g_3(x) = 1$. For a best-fit linear function of two variables, $g_1(x_1, x_2) = x_1$, $g_2(x_1, x_2) = x_2$, and $g_3(x_1, x_2) = 1$ (here we take $x$ to be a vector with two entries).

We evaluate the above equation on the given data points to obtain a system of linear equations in the unknowns $B_1, B_2, \ldots, B_m$, and we find the least-squares solution. The nature of the functions $g_i$ really is irrelevant: once we evaluate the $g_i$, they just become numbers, so it does not matter what they are. The resulting best-fit function minimizes the sum of the squares of the vertical distances from the graph of $y = f(x)$ to our original data points.

In matrix form this system reads $Ax = b$, and the vector in the column space $\operatorname{Col}(A)$ that lies closest to $b$ is denoted $b_{\operatorname{Col}(A)}$, following the notation of Section 6.3.
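The table-based procedure for the line of best fit described above can be sketched in a few lines of Python. The slope formula the steps refer to is not reproduced in the text, so this sketch assumes the standard closed form $m = \dfrac{N\sum xy - \sum x \sum y}{N\sum x^2 - (\sum x)^2}$ with intercept $(\sum y - m\sum x)/N$. The function name `fit_line_by_sums` and the sample points are illustrative only.

```python
def fit_line_by_sums(points):
    """Fit a line y = m*x + c to (x, y) pairs using column sums.

    Assumes the standard closed-form least-squares formulas; the name
    and signature are hypothetical, chosen for this illustration.
    """
    n = len(points)
    # Build the four-column table: x, y, x*y, x^2.
    table = [(x, y, x * y, x * x) for x, y in points]
    # Sum each column of the table.
    sum_x = sum(row[0] for row in table)
    sum_y = sum(row[1] for row in table)
    sum_xy = sum(row[2] for row in table)
    sum_x2 = sum(row[3] for row in table)
    denom = n * sum_x2 - sum_x ** 2
    if denom == 0:
        raise ValueError("all x values are equal; the slope is undefined")
    m = (n * sum_xy - sum_x * sum_y) / denom   # slope
    c = (sum_y - m * sum_x) / n                # intercept
    return m, c


# Illustrative data: three points that do not lie on one line.
slope, intercept = fit_line_by_sums([(0, 6), (1, 0), (2, 0)])
print(slope, intercept)
```

For these three points the sums are $\sum x = 3$, $\sum y = 6$, $\sum xy = 0$, and $\sum x^2 = 5$, which gives a slope of $-3$ and an intercept of $5$.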
The closest vector of the form $Ax$ to $b$ is the orthogonal projection of $b$ onto $\operatorname{Col}(A)$.
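To make the projection statement concrete, here is a minimal sketch that computes the orthogonal projection of a vector onto the span of a matrix's columns. It uses Gram-Schmidt orthogonalization, which is one possible technique and not necessarily the one the text has in mind. The helper names `dot` and `project_onto_span`, the tolerance, and the sample data are assumptions made for illustration.

```python
def dot(u, v):
    """Dot product of two equal-length sequences."""
    return sum(a * b for a, b in zip(u, v))


def project_onto_span(columns, b, tol=1e-12):
    """Orthogonal projection of b onto the span of the given columns.

    Builds an orthogonal (not normalized) basis with modified
    Gram-Schmidt, then sums the projections of b onto each basis vector.
    The absolute tolerance `tol` is an illustrative choice.
    """
    basis = []
    for col in columns:
        w = [float(v) for v in col]
        for u in basis:
            coeff = dot(w, u) / dot(u, u)
            w = [wi - coeff * ui for wi, ui in zip(w, u)]
        if dot(w, w) > tol:  # skip columns that add no new direction
            basis.append(w)
    projection = [0.0] * len(b)
    for u in basis:
        coeff = dot(b, u) / dot(u, u)
        projection = [pi + coeff * ui for pi, ui in zip(projection, u)]
    return projection


# Columns of a 3 x 2 matrix A, and a vector b outside Col(A).
columns = [[0, 1, 2], [1, 1, 1]]
b = [6, 0, 0]
p = project_onto_span(columns, b)
residual = [bi - pi for bi, pi in zip(b, p)]
# The residual b - p should be orthogonal to every column of A.
print(p, [dot(residual, col) for col in columns])
```

The check at the end reflects the defining property of the projection: the difference between $b$ and its projection is orthogonal to every column of $A$, up to rounding.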

Let $A$ be an $m \times n$ matrix and let $b$ be a vector in $\mathbb{R}^m$. Recall that $\operatorname{dist}(v, w) = \|v - w\|$ is the distance between the vectors $v$ and $w$. A least-squares solution of $Ax = b$ is a vector $\hat{x}$ in $\mathbb{R}^n$ for which $\operatorname{dist}(b, A\hat{x})$ is as small as possible. The term “least squares” comes from the fact that $\operatorname{dist}(b, A\hat{x}) = \|b - A\hat{x}\|$ is the square root of the sum of the squares of the entries of the vector $b - A\hat{x}$. So a least-squares solution minimizes the sum of the squares of the differences between the entries of $A\hat{x}$ and $b$. In other words, a least-squares solution solves the equation $Ax = b$ as closely as possible, in the sense that the sum of the squares of the entries of the difference $b - Ax$ is minimized.

Suppose that the equation $Ax = b$ is inconsistent. Recall from Section 2.3 that the column space of $A$ is the set of all vectors $c$ such that $Ax = c$ is consistent. In other words, $\operatorname{Col}(A)$ is the set of all vectors of the form $Ax$.

Here is a method for computing a least-squares solution of $Ax = b$: compute the matrix $A^T A$ and the vector $A^T b$, then form the augmented matrix for the matrix equation $A^T A x = A^T b$ and row reduce.
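The method just described (compute $A^T A$ and $A^T b$, form the augmented matrix, row reduce) can be sketched as follows. This is a minimal illustration that assumes the columns of $A$ are linearly independent, so the solution is unique. It uses exact `Fraction` arithmetic to avoid rounding, and the function name `least_squares_via_normal_equations` is hypothetical.

```python
from fractions import Fraction


def least_squares_via_normal_equations(A, b):
    """Solve A^T A x = A^T b by row reducing [A^T A | A^T b].

    Assumes A has linearly independent columns (a sketch, not a
    general-purpose solver). A is a list of rows, b a list of numbers.
    """
    m, n = len(A), len(A[0])
    A = [[Fraction(v) for v in row] for row in A]
    b = [Fraction(v) for v in b]
    # Compute A^T A (n x n) and A^T b (length n).
    ata = [[sum(A[k][i] * A[k][j] for k in range(m)) for j in range(n)]
           for i in range(n)]
    atb = [sum(A[k][i] * b[k] for k in range(m)) for i in range(n)]
    # Form the augmented matrix [A^T A | A^T b].
    aug = [ata[i] + [atb[i]] for i in range(n)]
    # Row reduce to reduced row echelon form (Gauss-Jordan elimination).
    for col in range(n):
        pivot = next((r for r in range(col, n) if aug[r][col] != 0), None)
        if pivot is None:
            raise ValueError("A^T A is singular; the solution is not unique")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        pivot_value = aug[col][col]
        aug[col] = [v / pivot_value for v in aug[col]]
        for r in range(n):
            if r != col and aug[r][col] != 0:
                factor = aug[r][col]
                aug[r] = [vr - factor * vc for vr, vc in zip(aug[r], aug[col])]
    # The last column of the reduced matrix is the solution.
    return [aug[i][n] for i in range(n)]


# Illustrative inconsistent system: fit a line through (0, 6), (1, 0), (2, 0).
A = [[0, 1], [1, 1], [2, 1]]
b = [6, 0, 0]
x_hat = least_squares_via_normal_equations(A, b)
print(x_hat)
```

Here $A^T A = \begin{pmatrix} 5 & 3 \\ 3 & 3 \end{pmatrix}$ and $A^T b = (0, 6)$, and row reduction yields $\hat{x} = (-3, 5)$. For large or ill-conditioned problems, a library routine based on QR or SVD is usually preferred over forming $A^T A$ explicitly.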
